For nearly 75 years, the Centers for Disease Control and Prevention (CDC) has been at the forefront of protecting the United States, and the world, from threats to public health such as infectious and chronic diseases, injuries, workplace hazards, disabilities, and environmental health issues. Not only has it been a leader in meeting public health challenges, but its elite scientists, epidemiologists, researchers, surveillance teams, doctors, and public health advisors have also taught local staff members in communities, states, territories, and countries worldwide to carry on its work long after CDC's public health professionals have packed their equipment and returned home.

From Malaria Control to a Larger Purpose

Organized on July 1, 1946, CDC evolved from a World War II agency, the Office of Malaria Control in War Areas (MCWA), a program within the US Public Health Service (PHS). Established in 1942, MCWA's primary job was to control and prevent malaria around military bases and industrial sites engaged in wartime production. These "war areas" were located mainly in 15 southeastern states, Puerto Rico, the Virgin Islands, and other Caribbean areas linked to the United States. Once World War II was over, the federal government shifted MCWA operations from war-related efforts to broader communicable disease problems affecting the nation as a whole, and MCWA became the Communicable Disease Center (CDC), headquartered in Atlanta, Georgia. It is fitting that CDC emerged from a wartime effort, because from its inception, it has been waging war against the world's gravest health threats and medical mysteries.


A brief glimpse at the nation’s public health landscape prior to the creation of CDC may offer added appreciation for the agency’s stunning accomplishments over the years.

When the country's founders formed the United States, the 13 original colonies were centered on seaports along the Atlantic Coast, with small towns farther inland. The colonies had local sanitation laws and were keenly aware of diseases carried on ships sailing to the New World. Even after the formal creation of the United States, the government did not address public health; the US Constitution makes no mention of it. However, following a yellow fever epidemic in 1798, Pres. John Adams signed the first federal public health law, which created the Marine Hospital Service (MHS) for merchant seamen, a forerunner of the modern PHS. This law imposed a monthly hospital tax of 20¢, deducted from the pay of merchant seamen, to fund the care of sick seamen and the building of independently operated marine hospitals. An amending act to the legislation of 1798 extended MHS benefits to officers and enlisted men of the US Navy. MHS was also responsible for the medical inspection of immigrants, the supervision of national quarantine, and the prevention and control of the interstate spread of diseases such as yellow fever, cholera, and smallpox.

Throughout the 18th and 19th centuries, diseases ran rampant due to poor sanitation and the limited availability of doctors. Most family illnesses were treated at home using homemade herbal remedies. Often, if no cause for an epidemic was known, people simply waited until it had run its course. Although “doctors” were officially recognized in 1769, they were only educated to take care of broken bones and to prescribe herbs and hard liquor that would vanquish evil spirits. Few doctors had any formal training; most learned from other physicians in an informal setting.

In 1902, Congress enacted a bill to increase the efficiency of the Marine Hospital Service and rename it the Public Health and Marine Hospital Service. The law authorized the establishment of specified administrative divisions and, for the first time, designated a bureau of the federal government as an agency in which public health matters could be coordinated. In 1912, the name was shortened to the US Public Health Service, and the PHS research program was broadened to include "diseases of man" and contributing factors such as pollution of navigable streams, as well as the dissemination of health information. By the early 20th century, some progress had been made in treating communicable diseases, but epidemics such as the plague that hit San Francisco in the early 1900s and the global Spanish influenza pandemic of 1918 and 1919 showed that, despite PHS efforts, much remained to be done to address health emergencies.

When CDC was created in 1946, the fledgling agency had an ambitious agenda, but with a core staff of only 430 and a budget of $1.6 million, it faced formidable challenges. Dr. Joseph Mountin, a visionary leader in the PHS, hoped to expand CDC's interests to include all communicable diseases and to provide guidance and practical help to all entities associated with the United States. World-class scientists soon began filling CDC's laboratories, and many states and foreign countries sent staff members to Atlanta for training. Although the new agency was making headway in the prevention and control of malaria, typhus, and yellow fever, Dr. Mountin was not satisfied with this progress and impatiently pushed the staff to do more. He reminded them that CDC was responsible for any communicable disease. To survive, it had to become a center for epidemiology.


In 1949, Dr. Alexander Langmuir came to CDC to head the epidemiology division. He quickly organized a disease surveillance system that would ultimately become the cornerstone of CDC. The threat of biological warfare that loomed after the outbreak of the Korean War in 1950 led to the organization of CDC’s Epidemic Intelligence Service (EIS). EIS officers were charged with guarding against ordinary threats to public health while simultaneously watching for new and emerging infectious diseases.

The first class of EIS officers began work in 1951, pledging to go wherever they were needed over the following two years. They quickly became known as “disease detectives.” Using “shoe-leather epidemiology,” they traveled door-to-door in areas suffering from a disease outbreak to gather surveillance data, literally making house calls around the world. There were 23 recruits in the first EIS class: 22 physicians and one sanitary engineer. Today, classes of around 80 EIS officers are given two-year assignments domestically and internationally. Classes are composed of medical doctors, veterinarians, nurses, researchers, dentists, and scientists. In addition to working with CDC public health advisors in global disease “hot spots,” the majority of EIS graduates work with state and local health departments to address a broad spectrum of health challenges including chronic disease, injury prevention, violence, environmental health, occupational safety and health, and maternal and child health, as well as infectious diseases.

Polio and the Asian Flu Pandemic

Two major health crises in the 1950s helped to establish CDC's credibility and reputation. A surge of poliomyelitis cases in the 1950s created nationwide panic, and state and local health departments were tasked with administering a national project to vaccinate thousands of children and adults across the country. When polio appeared in children following inoculation with the Salk vaccine, EIS officers helped trace the problem to vaccine from a single manufacturer. In 1957, when the United States faced the huge Asian flu pandemic, EIS teams gathered data and developed national guidelines for an influenza vaccine. In this era, CDC's contributions to coordinating immunization campaigns and its involvement in other public health projects began to give the nation and the world a glimpse of its real potential.

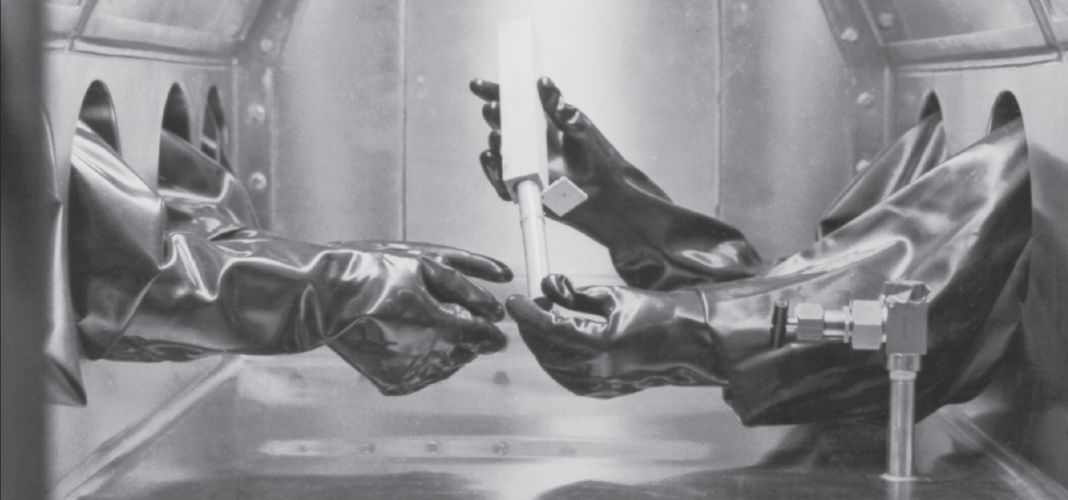

In the late 1950s and 1960s, CDC grew even larger with the addition of the venereal disease program (1957), the tuberculosis program (1960), and the Foreign Quarantine Service (1967), one of the oldest and most prestigious units of the PHS. In 1961, the morbidity reporting function of the National Office of Vital Statistics was moved to Atlanta, and CDC began publishing the Morbidity and Mortality Weekly Report. Each issue of this report contains accurate, timely scientific information about the most recent outbreaks, investigations, and public health recommendations. To this day, this unique publication has never missed its weekly deadline. In the late 1960s, CDC worked closely with the National Aeronautics and Space Administration (NASA) to protect Earth from germs that returning Apollo astronauts could potentially bring back from space and, conversely, to prevent harmful Earth germs from being carried into space.

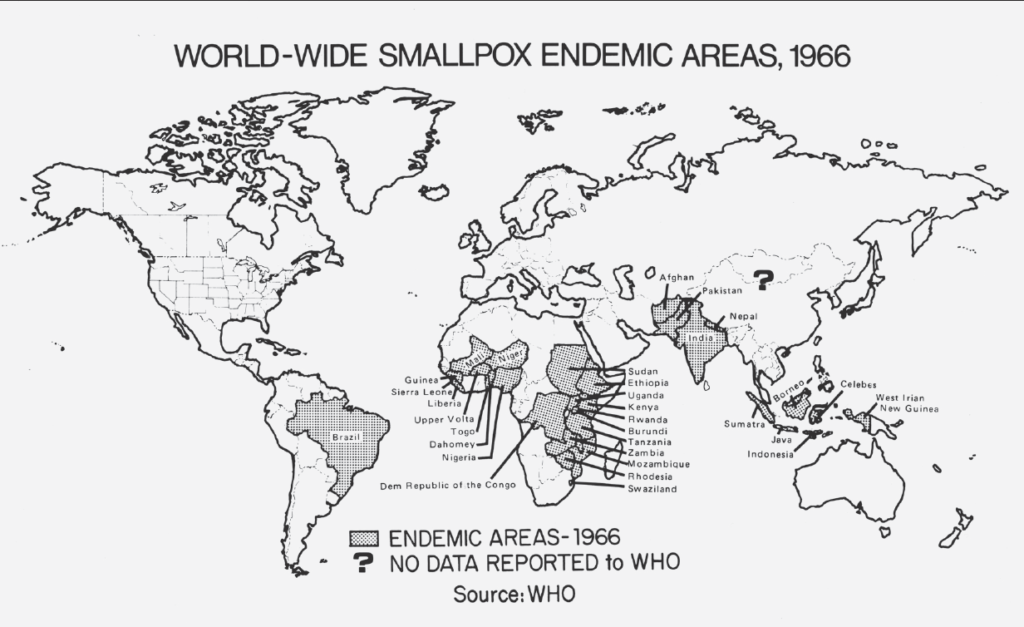

The establishment of the smallpox surveillance unit in 1963 would give CDC one of its greatest achievements in public health: the eradication of smallpox. By refining mass vaccination techniques with the Ped-o-Jet, an instrument modified by the US Army for CDC use, and by working in tandem with other global health agencies on a successful "surveillance and containment" strategy, CDC helped eradicate the smallpox virus from the world by 1980.

In 1970, the federal government renamed CDC the Center for Disease Control to reflect its expanding presence in matters related to public health. During this decade, CDC, while maintaining its role in fighting infectious diseases, was also becoming a principal prevention arm of the federal government. Soon, it became involved in a wide array of public health programs dealing with chronic disease and injury control, family planning, and health-related aspects of human lifestyles and community environments. Efforts to improve occupational environments were given a boost when the National Institute for Occupational Safety and Health (NIOSH) joined the ranks of CDC. Watershed events in the 1970s included the outbreak of the mysterious Legionnaires' disease and the first outbreak of Ebola in Africa. CDC surveillance and research helped address both emergencies.

During the 1980s, newly emerging infectious diseases became the focus for many CDC researchers and epidemiologists. AIDS emerged suddenly to become a premier health threat; CDC provided leadership for the first task force to address the issue and continues to help in the fight against this deadly disease. CDC also helped with the Atlanta Child Murders investigation in the early 1980s. Known as the "missing and murdered children case," this tragic crime involved a series of murders committed in Atlanta, Georgia, from the summer of 1979 until the spring of 1981. Over the two-year period, at least 28 African American children, adolescents, and adults were killed. CDC assisted by applying epidemiological detective work to identify the risk factors the victims had in common. CDC's participation in this investigation led to the creation of its Violence Epidemiology Branch in 1983, now the Division of Violence Prevention.

In the 1990s, CDC merged the disciplines of health economics and decision science to create a new area of emphasis: prevention effectiveness. Other contributions to worldwide health during this decade included participation in the global polio eradication campaign and expanded research in prenatal care aimed at improving infant health by reducing serious birth defects such as spina bifida and anencephaly.

At the dawn of the 21st century, the challenges kept coming, and CDC kept stepping up to the plate. Both the anticipated Y2K computer crisis and the actual disaster of September 11, 2001, at the World Trade Center and the Pentagon required assistance from CDC. Natural disaster response, part of CDC tradition since the 1940s, has also kept agency staff involved as first responders during national and international catastrophes.

In 1992, Congress changed the agency's name one final time, to the Centers for Disease Control and Prevention. Today, CDC is part of the US Department of Health and Human Services and has grown to include more than 15 centers plus numerous offices and branches.

Since 1946, CDC has used scientific and epidemiological expertise to help people around the world enjoy healthier, safer, longer lives. It is recognized today for its scientific research, its investigations, and its application of findings to improve people's daily lives and to respond to health emergencies, a combination that sets CDC apart from its peer agencies. As CDC moves forward in the 21st century, it has five strategic areas of focus: supporting state and local health departments, looking for ways to improve global health, implementing measures to decrease the leading causes of death, strengthening surveillance and epidemiology, and working to reform health policies.

LEARN MORE: READ THE BOOK

For an in-depth look at the history of CDC, along with photos and stories from its past, read Bob Kelley's Images of America: Centers for Disease Control and Prevention from Arcadia Publishing.

Inside the book you'll find a decade-by-decade history of the agency:

1. Before CDC: America’s First Health Responders

2. The 1940s: The Battle Against Malaria Gives Rise to CDC

3. The 1950s: The “War Baby” Grows Up

4. The 1960s: New Challenges Shape CDC’s Future

5. The 1970s: CDC Grows, Creates New Prevention Strategies

6. The 1980s: The Global Village Is Invaded by AIDS

7. The 1990s: CDC Becomes a Global Force

8. The 2000s: A New Century with New Challenges
